Published

- 10 min read

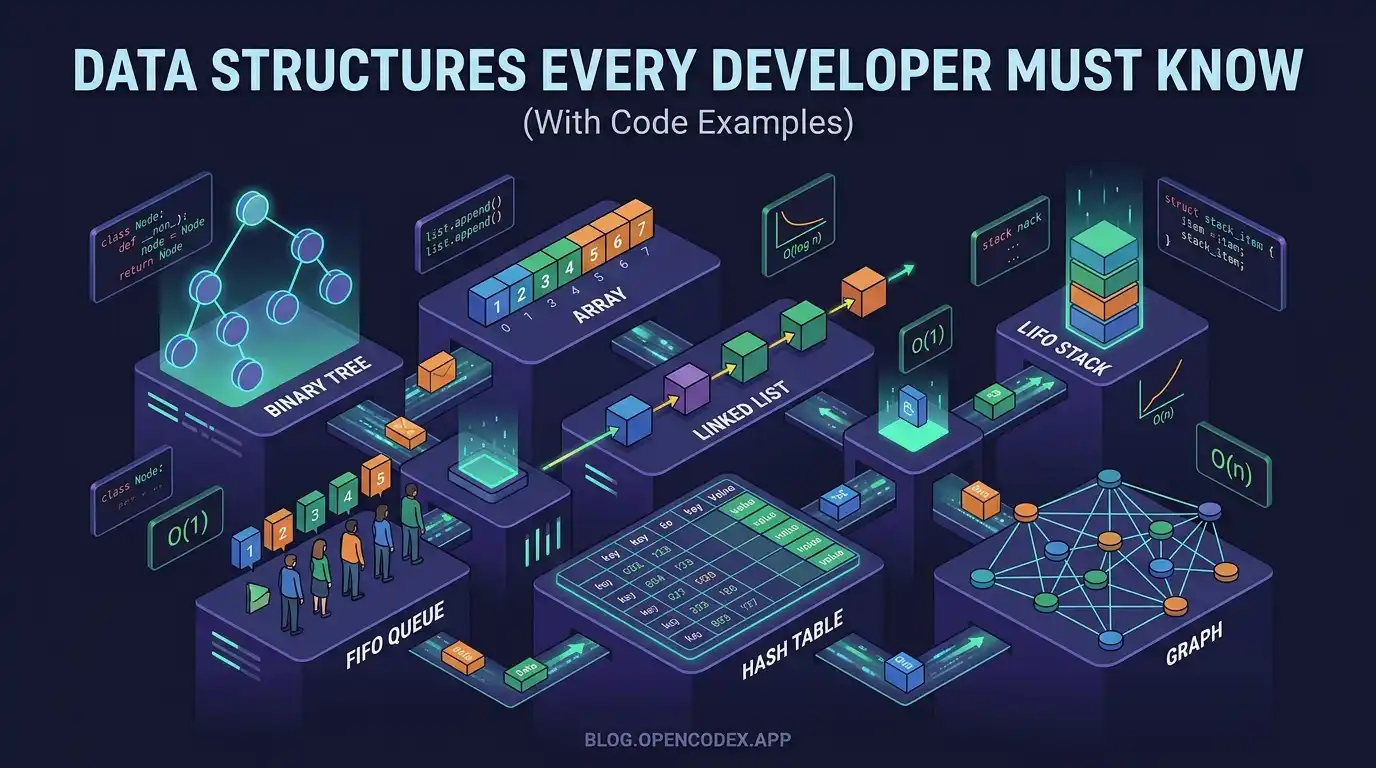

Data Structures Every Developer Must Know (With Code Examples)

Data Structures Every Developer Must Know (With Code Examples)

Data structures are the building blocks of software engineering. Whether you’re writing a simple script or training a machine learning model, the data structures you choose determine how efficiently your code runs—and sometimes whether it runs at all.

If you’ve ever wondered why some solutions are lightning-fast while others crawl, the answer almost always lies in choosing the right data structure for the problem. In this guide, we’ll break down the essential data structures every developer should master, with clear explanations, time complexity analysis, and practical code examples.

This guide is perfect for self-taught developers, computer science students, and competitive programmers preparing for technical interviews.

Índice

- Why Data Structures Matter

- Array: The Foundation

- Linked Lists: Dynamic Chains

- Stacks: Last In, First Out

- Queues: First In, First Out

- Hash Tables: Fast Lookup Magic

- Trees: Hierarchical Structure

- Graphs: Connected Worlds

- Comparison Chart

- Which Data Structure Should You Use?

- Conclusion

Why Data Structures Matter

Before diving into specific structures, let’s understand why they matter:

- Performance: The same operation can take nanoseconds or centuries depending on your data structure

- Memory efficiency: Some structures use memory wisely; others waste it spectacularly

- Code clarity: The right structure makes your code naturally express the problem

Consider this: searching for an element in an unsorted array takes O(n) time, but in a balanced binary search tree, it takes O(log n). For a million elements, that’s the difference between a million operations and twenty.

Array: The Foundation

The array is the most fundamental data structure in programming. It’s a contiguous block of memory that stores elements of the same type, accessible by their index.

How Arrays Work

When you declare an array, the computer allocates a fixed block of memory. Each element sits next to its neighbors—no gaps, no pointers. This is why arrays offer O(1) random access.

Code Example in Python

# Creating an array

numbers = [10, 20, 30, 40, 50]

# Accessing elements (O(1))

print(numbers[0]) # Output: 10

print(numbers[4]) # Output: 50

# Searching (O(n))

if 30 in numbers:

print("Found!")

# Accessing the last element

print(numbers[-1]) # Output: 50Code Example in C++

#include <iostream>

using namespace std;

int main() {

// Static array

int numbers[5] = {10, 20, 30, 40, 50};

// Accessing elements (O(1))

cout << numbers[0] << endl; // Output: 10

// Dynamic array (vector)

vector<int> dynamicNumbers = {10, 20, 30, 40, 50};

dynamicNumbers.push_back(60); // Add element at end

return 0;

}Time Complexity

| Operation | Time Complexity |

|---|---|

| Access by index | O(1) |

| Search | O(n) |

| Insert at end | O(1) amortized |

| Insert at middle | O(n) |

| Delete | O(n) |

When to Use Arrays

- When you need fast random access to elements

- When you know the exact size upfront

- When cache performance matters (arrays are cache-friendly)

Linked Lists: Dynamic Chains

Unlike arrays, linked lists store elements in nodes scattered across memory. Each node contains the data and a pointer (or reference) to the next node.

Types of Linked Lists

- Singly Linked List: Each node points only to the next node

- Doubly Linked List: Each node points to both next and previous

- Circular Linked List: The last node points back to the first

Code Example: Singly Linked List in Python

class Node:

def __init__(self, data):

self.data = data

self.next = None

class LinkedList:

def __init__(self):

self.head = None

def append(self, data):

new_node = Node(data)

if not self.head:

self.head = new_node

return

current = self.head

while current.next:

current = current.next

current.next = new_node

def display(self):

elements = []

current = self.head

while current:

elements.append(str(current.data))

current = current.next

print(" -> ".join(elements))

# Usage

ll = LinkedList()

ll.append(10)

ll.append(20)

ll.append(30)

ll.display() # Output: 10 -> 20 -> 30Time Complexity

| Operation | Time Complexity |

|---|---|

| Access by index | O(n) |

| Search | O(n) |

| Insert at head | O(1) |

| Insert at tail | O(n) without tail pointer |

| Delete at head | O(1) |

Why Linked Lists Matter

The key advantage of linked lists is O(1) insertion and deletion at known positions—no shifting required. They’re ideal when:

- You don’t know the total size upfront

- You’re frequently adding/removing elements at the beginning

- You need to maintain ordered collections with frequent modifications

Stacks: Last In, First Out

Stacks follow the LIFO (Last In, First Out) principle. Think of a stack of plates: you add to the top and remove from the top.

Real-World Applications

- Function call management: The call stack stores return addresses

- Undo mechanisms: Text editors use stacks to track actions

- Expression evaluation: Parsers use stacks for syntax analysis

- Browser history: Back button functionality

Code Example in Python

class Stack:

def __init__(self):

self.items = []

def push(self, item):

self.items.append(item)

def pop(self):

if self.is_empty():

raise IndexError("Stack is empty")

return self.items.pop()

def peek(self):

return self.items[-1] if self.items else None

def is_empty(self):

return len(self.items) == 0

def size(self):

return len(self.items)

# Usage

stack = Stack()

stack.push(10)

stack.push(20)

stack.push(30)

print(stack.pop()) # Output: 30

print(stack.peek()) # Output: 20Code Example: Balancing Parentheses

def is_balanced(expression):

stack = Stack()

pairs = {')': '(', ']': '[', '}': '{'}

for char in expression:

if char in '([{':

stack.push(char)

elif char in ')]}':

if stack.is_empty() or stack.pop() != pairs[char]:

return False

return stack.is_empty()

# Tests

print(is_balanced("({[]})")) # Output: True

print(is_balanced("([)]")) # Output: FalseTime Complexity

| Operation | Time Complexity |

|---|---|

| Push | O(1) |

| Pop | O(1) |

| Peek | O(1) |

Queues: First In, First Out

Queues follow FIFO (First In, First Out). Like a line at a coffee shop, the first person in is the first person served.

Types of Queues

- Simple Queue: Basic FIFO

- Circular Queue: Last position connects to first

- Priority Queue: Elements have priority levels

- Deque (Double-Ended Queue): Add/remove from both ends

Code Example in Python

from collections import deque

class Queue:

def __init__(self):

self.items = deque()

def enqueue(self, item):

self.items.append(item)

def dequeue(self):

if self.is_empty():

raise IndexError("Queue is empty")

return self.items.popleft()

def front(self):

return self.items[0] if self.items else None

def is_empty(self):

return len(self.items) == 0

def size(self):

return len(self.items)

# Usage

queue = Queue()

queue.enqueue("Alice")

queue.enqueue("Bob")

queue.enqueue("Charlie")

print(queue.dequeue()) # Output: Alice

print(queue.front()) # Output: BobCode Example: BFS Using a Queue

from collections import deque

def bfs(graph, start):

visited = set()

queue = Queue()

queue.enqueue(start)

visited.add(start)

while not queue.is_empty():

node = queue.dequeue()

print(f"Visiting: {node}")

for neighbor in graph.get(node, []):

if neighbor not in visited:

visited.add(neighbor)

queue.enqueue(neighbor)

# Example graph (adjacency list)

graph = {

'A': ['B', 'C'],

'B': ['A', 'D', 'E'],

'C': ['A', 'F'],

'D': ['B'],

'E': ['B', 'F'],

'F': ['C', 'E']

}

bfs(graph, 'A')Time Complexity

| Operation | Time Complexity |

|---|---|

| Enqueue | O(1) |

| Dequeue | O(1) |

| Front | O(1) |

Hash Tables: Fast Lookup Magic

Hash tables are the superheroes of data structures. They provide O(1) average-case lookup, insertion, and deletion by using a hash function to compute an index into an array.

How Hash Tables Work

- A hash function converts a key into a numeric index

- The value is stored at that index in the underlying array

- Collisions (two keys mapping to the same index) are handled via chaining or open addressing

Code Example in Python

# Using Python's built-in dictionary (hash table)

phonebook = {}

# Insert (O(1))

phonebook["Alice"] = "555-1234"

phonebook["Bob"] = "555-5678"

phonebook["Charlie"] = "555-9999"

# Lookup (O(1))

print(phonebook["Bob"]) # Output: 555-5678

# Check existence (O(1))

if "Alice" in phonebook:

print("Alice found!")

# Delete (O(1))

del phonebook["Charlie"]

# Iterate

for name, number in phonebook.items():

print(f"{name}: {number}")Code Example: Custom Hash Table Implementation

class HashTable:

def __init__(self, size=100):

self.size = size

self.table = [[] for _ in range(size)]

def _hash(self, key):

return hash(key) % self.size

def put(self, key, value):

index = self._hash(key)

bucket = self.table[index]

for i, (k, v) in enumerate(bucket):

if k == key:

bucket[i] = (key, value)

return

bucket.append((key, value))

def get(self, key):

index = self._hash(key)

bucket = self.table[index]

for k, v in bucket:

if k == key:

return v

return None

def delete(self, key):

index = self._hash(key)

bucket = self.table[index]

for i, (k, v) in enumerate(bucket):

if k == key:

del bucket[i]

return True

return False

# Usage

ht = HashTable()

ht.put("name", "Alice")

ht.put("age", 30)

print(ht.get("name")) # Output: AliceTime Complexity (Average Case)

| Operation | Time Complexity |

|---|---|

| Lookup | O(1) |

| Insert | O(1) |

| Delete | O(1) |

| Search | O(1) |

When Hash Tables Excel

- Fast lookups are critical

- You have key-value pairs (like dictionaries)

- You’re building a cache system

- You need to track unique values (using a set)

Trees: Hierarchical Structure

Trees are hierarchical data structures with a root node and child nodes. The most common is the Binary Search Tree (BST), where each node has at most two children, and left children are smaller than the parent.

Why Trees Matter

Trees power some of the most important data structures in computing:

- File systems: Directories and files

- Databases: Indexes for fast querying

- Compilers: Abstract Syntax Trees (AST)

- DOM: Web page structure

Code Example: Binary Search Tree in Python

class TreeNode:

def __init__(self, key):

self.key = key

self.left = None

self.right = None

class BST:

def __init__(self):

self.root = None

def insert(self, key):

self.root = self._insert_recursive(self.root, key)

def _insert_recursive(self, node, key):

if node is None:

return TreeNode(key)

if key < node.key:

node.left = self._insert_recursive(node.left, key)

else:

node.right = self._insert_recursive(node.right, key)

return node

def search(self, key):

return self._search_recursive(self.root, key)

def _search_recursive(self, node, key):

if node is None or node.key == key:

return node

if key < node.key:

return self._search_recursive(node.left, key)

return self._search_recursive(node.right, key)

def inorder(self):

result = []

self._inorder_recursive(self.root, result)

return result

def _inorder_recursive(self, node, result):

if node:

self._inorder_recursive(node.left, result)

result.append(node.key)

self._inorder_recursive(node.right, result)

# Usage

bst = BST()

for value in [50, 30, 70, 20, 40, 60, 80]:

bst.insert(value)

print(bst.inorder()) # Output: [20, 30, 40, 50, 60, 70, 80]

print(bst.search(40)) # Output: TreeNode with key=40Time Complexity (Balanced BST)

| Operation | Time Complexity |

|---|---|

| Search | O(log n) |

| Insert | O(log n) |

| Delete | O(log n) |

| Inorder Traversal | O(n) |

AVL Trees and Red-Black Trees

Standard BSTs can degrade to O(n) if elements are inserted in order. Self-balancing trees like AVL trees and Red-Black trees maintain O(log n) by automatically balancing during insertions.

Graphs: Connected Worlds

Graphs consist of vertices (nodes) connected by edges. They’re perfect for modeling relationships and networks: social connections, road maps, computer networks, and dependencies.

Types of Graphs

- Directed vs Undirected: Edges have direction or don’t

- Weighted vs Unweighted: Edges have weights or not

- Cyclic vs Acyclic: Contain cycles or don’t

- Sparse vs Dense: Few or many edges

Code Example: Adjacency List in Python

class Graph:

def __init__(self):

self.graph = {}

def add_vertex(self, vertex):

if vertex not in self.graph:

self.graph[vertex] = []

def add_edge(self, v1, v2, directed=False):

self.add_vertex(v1)

self.add_vertex(v2)

self.graph[v1].append(v2)

if not directed:

self.graph[v2].append(v1)

def display(self):

for vertex in self.graph:

print(f"{vertex} -> {self.graph[vertex]}")

# Usage: Building a social network

g = Graph()

g.add_edge("Alice", "Bob")

g.add_edge("Alice", "Charlie")

g.add_edge("Bob", "Diana")

g.add_edge("Charlie", "Diana")

g.display()

# Output:

# Alice -> ['Bob', 'Charlie']

# Bob -> ['Alice', 'Diana']

# Charlie -> ['Alice', 'Diana']

# Diana -> ['Bob', 'Charlie']Code Example: DFS and BFS

def dfs(graph, start, visited=None):

if visited is None:

visited = set()

visited.add(start)

print(f"Visiting: {start}")

for neighbor in graph.get(start, []):

if neighbor not in visited:

dfs(graph, neighbor, visited)

return visited

def bfs(graph, start):

visited = set()

queue = [start]

visited.add(start)

while queue:

vertex = queue.pop(0)

print(f"Visiting: {vertex}")

for neighbor in graph.get(vertex, []):

if neighbor not in visited:

visited.add(neighbor)

queue.append(neighbor)

# Example graph

graph = {

'A': ['B', 'C'],

'B': ['D', 'E'],

'C': ['F'],

'D': [],

'E': ['F'],

'F': []

}

print("DFS:")

dfs(graph, 'A')

print("\nBFS:")

bfs(graph, 'A')Graph Traversal Applications

- DFS: Finding paths, detecting cycles, topological sorting

- BFS: Shortest path (unweighted), level-order traversal, finding connected components

Time Complexity (Adjacency List)

| Operation | Time Complexity |

|---|---|

| Add Vertex | O(1) |

| Add Edge | O(1) |

| Remove Vertex | O(V + E) |

| Query Edge | O(V) worst case |

| Traversal | O(V + E) |

Comparison Chart

| Data Structure | Access | Search | Insert | Delete | Use When |

|---|---|---|---|---|---|

| Array | O(1) | O(n) | O(n) | O(n) | Fast access, fixed size |

| Linked List | O(n) | O(n) | O(1)* | O(1)* | Frequent insertions/deletions |

| Stack | O(n) | O(n) | O(1) | O(1) | LIFO workflows, undo features |

| Queue | O(n) | O(n) | O(1) | O(1) | FIFO workflows, scheduling |

| Hash Table | N/A | O(1) avg | O(1) avg | O(1) avg | Fast lookups, key-value data |

| BST (balanced) | O(log n) | O(log n) | O(log n) | O(log n) | Ordered data, range queries |

| Graph | O(V + E) | O(V + E) | O(1) | O(E) | Networks, relationships |

*At known position (head or tail)

Which Data Structure Should You Use?

Choosing the right data structure depends on your specific problem. Here’s a practical decision guide:

I need to store elements and access them by index

→ Use an Array (or dynamic array like Python’s list)

I need to frequently add/remove at the beginning

→ Use a Linked List or Deque

I need to process items in order of arrival

→ Use a Queue

I need to process items in reverse order

→ Use a Stack

I need fast lookups by key

→ Use a Hash Table

I need ordered data with range queries

→ Use a Balanced BST (like TreeSet in Java)

I need to model relationships/networks

→ Use a Graph

Conclusion

Data structures are the foundation of efficient programming. The key takeaways:

- Arrays offer O(1) access but expensive insertions

- Linked Lists excel at O(1) insertions at known positions

- Stacks and Queues model LIFO and FIFO workflows elegantly

- Hash Tables provide near-instantaneous lookups

- Trees maintain order with O(log n) operations

- Graphs model complex relationships and networks

Master these fundamentals, and you’ll write better code—whether you’re solving competitive programming challenges, preparing for technical interviews, or building production systems.

In future posts, we’ll dive deeper into advanced data structures like Heaps, Tries, and Segment Trees, as well as algorithmic techniques that combine these structures for powerful problem-solving.

If you found this guide helpful, share it with your fellow developers. See you in the next post!